Let’s be pleasantly blunt for a second: we’ve officially entered the era where brands say "personalization" when they really mean "we tracked you six ways from Sunday and hope you don’t notice."

By 2026, every CMO, enrollment leader, and government communications team has heard the same pitch: AI will make every experience smarter, faster, and more relevant. In theory, great. In practice, I keep seeing the same ugly irony: brands are using AI to "personalize" experiences while simultaneously losing the trust of the very people they’re trying to reach through invasive, unmanaged tracking.

That’s the rant.

We keep obsessing over outputs: the chatbot, the recommendation engine, the predictive score, the auto-generated email copy. Meanwhile, the inputs are a mess. Consent is fuzzy. Governance is weak. Old identifiers stick around forever. And then everyone acts shocked when the machine starts producing sketchy results.

Your AI is only as honest as your data. And if the data was collected in ways your customer would find creepy, manipulative, or flat-out irresponsible, the AI layer doesn’t magically clean that up. It scales the problem.

The Philosophy: Permission-Based Analytics

Here’s the shift I think smart organizations need to make: the next era of analytical clarity is not about collecting more data. It’s about earning better permissions.

That matters even more for government agencies, higher ed institutions, and large B2B organizations where privacy expectations are higher, internal complexity is real, and one bad data practice can trigger legal, reputational, and operational headaches all at once.

Permission-Based Analytics is not just a prettier phrase for a cookie banner. It’s a different operating model.

- You collect less, but with more intention.

- You ask more clearly, so the data you keep is higher quality.

- You design measurement around trust, not surveillance.

That’s how you get to actual analytical clarity.

Not by hoarding every click and stuffing it into a black box.

Not by letting vendors build behavioral dossiers you can’t explain.

And definitely not by feeding AI systems a pile of ambiguous, stale, questionably sourced data and calling it innovation.

When permissions are clean, your data gets cleaner. When your data gets cleaner, your models get more useful. And when your models get more useful, analytics becomes what it should have been all along: a customer experience launching pad, not a digital dragnet.

The Shift: From "Data Hoarding" to "Analytical Stewardship"

We need to stop talking about "Marketing Analytics" as a way to spy on people and start talking about Analytical Stewardship.

The "Maturity Gap" I see in most organizations stems from a lack of respect for the user's sovereignty. We’ve spent years trying to find workarounds for the decline of third-party cookies. We’ve tried to "patch" our tracking.

But the winners in 2026 are moving toward Permission-Based Analytics.

This isn't just about putting a cookie banner on your site and calling it a day. It’s about a fundamental shift in strategy:

- Value-First Collection: You only ask for data when you’ve provided enough value to justify the ask.

- Zero-Knowledge Goals: You aim for high-level attribution without needing to know the user's blood type and childhood pet's name.

- Data Sovereignty: You ensure the client, not the vendor, owns every single data point.

When you have a "clean" data set built on transparency, your AI models tend to perform better. High-integrity data sets carry less "noise" (fewer stale, duplicated, or ambiguous records), which generally means your predictive models are more accurate and your web analytics actually tell a human-readable story.

The Pragmatic Advice: A 3-Step Audit for Your Data Privacy Layer

If you’re worried your AI is making confident decisions on top of sloppy data practices, don’t start with another tool. Start with an audit.

Here’s the three-step gut check I’d recommend.

1. Review Your Server-Side Tagging Status

A lot of teams still have no clear answer to a basic question: where is collection actually happening, and who controls it?

If your measurement stack still depends entirely on browser-side scripts and a pile of vendor tags, you probably have more leakage, less control, and weaker governance than you think. Server-side tagging won’t solve every privacy problem, but it does give you a better foundation for control, filtering, and data minimization.
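
To make "control, filtering, and data minimization" a little more concrete, here is a minimal sketch of the kind of check a server-side collection step could run before anything gets forwarded to a vendor. Everything named here is a hypothetical illustration: the `ALLOWED_FIELDS` allowlist, the `consent` flag, and the event shape are assumptions, not any tagging product's actual API.

```python
from typing import Any, Optional

# Hypothetical allowlist: the only fields this sketch ever forwards downstream.
ALLOWED_FIELDS = {"event_name", "page_path", "timestamp"}


def minimize_event(event: dict[str, Any]) -> Optional[dict[str, Any]]:
    """Return a trimmed copy of the event, or None when consent is missing."""
    # Assumption: each incoming event carries an explicit boolean consent flag.
    if event.get("consent") is not True:
        return None
    # Keep only allowlisted fields; anything else is dropped before forwarding.
    return {key: value for key, value in event.items() if key in ALLOWED_FIELDS}


raw_event = {
    "consent": True,
    "event_name": "form_submit",
    "page_path": "/admissions/apply",
    "timestamp": "2026-01-15T14:02:00Z",
    "email": "someone@example.com",  # not on the allowlist, so it is dropped
}
print(minimize_event(raw_event))
```

The design choice worth noticing is the allowlist: the default is to drop a field unless someone has deliberately decided to keep it, which is the opposite of how most browser-side tag piles behave.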

2. Purge Your "Zombie" User IDs

Go look at your CRM, CDP, analytics configuration, and audience systems. How many old identifiers are still hanging around with no legitimate business reason to exist?

These are your "zombie" user IDs. They clutter models, distort reporting, and expand your risk surface for no good reason. If someone hasn’t meaningfully engaged in years, or you can’t justify why that identifier still exists, get rid of it. You do not get bonus points for storing stale identity debris.
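
If you want a starting point for finding them, a rough sketch might look like the following. It assumes you can export each identifier with a last-engagement timestamp; the two-year `RETENTION` window and the `find_zombie_ids` helper are hypothetical, and the right cutoff is a policy decision for your organization, not something the code can tell you.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention window; pick yours based on policy and legal advice.
RETENTION = timedelta(days=2 * 365)


def find_zombie_ids(last_seen: dict[str, datetime], now: datetime) -> list[str]:
    """Return identifiers whose last engagement is older than RETENTION."""
    cutoff = now - RETENTION
    return sorted(uid for uid, seen in last_seen.items() if seen < cutoff)


# Made-up sample data standing in for a CRM or CDP export.
now = datetime(2026, 1, 1, tzinfo=timezone.utc)
last_seen = {
    "user-a": datetime(2025, 11, 3, tzinfo=timezone.utc),
    "user-b": datetime(2021, 6, 14, tzinfo=timezone.utc),
    "user-c": datetime(2023, 2, 20, tzinfo=timezone.utc),
}
print(find_zombie_ids(last_seen, now))
```

The output is a review list, not an automatic delete. Someone should still confirm there is no legal hold or legitimate business reason before the purge runs.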

3. Make Sure Your First-Party Data Is Actually Yours

This is where a lot of organizations fool themselves.

If your "first-party" data only exists inside a vendor platform, if exports are limited, if identity resolution is opaque, or if the logic is trapped in somebody else’s system, that is not real ownership. That is rented access. Your first-party data should be governed by you, stored in environments you control, and understandable without needing a vendor translator.

If not, you’re building your AI future on top of a black box.

Why Minimalist Data is Your New Competitive Moat

I’ve spent 20 years helping organizations realize that the system matters more than the tool. Whether you are using HubSpot, Marketo, or a custom-built stack for a federal agency, the software is secondary to the process.

In the era of AI, a "lean" data strategy is a competitive advantage.

- Faster Training: Smaller, cleaner data sets shorten training runs, so your team can iterate on models faster.

- Lower Risk: You can't lose what you don't keep.

- Higher Trust: When you tell a customer, "We only track X so we can provide Y," and you actually stick to it, you build a brand moat that no AI chatbot can replicate.

For my clients in Higher Ed and Government, this is where you can actually beat the "big tech" players. You have the opportunity to be the "trusted source." But that trust is fragile. If your "personalization" feels like surveillance, you’ve already lost.

The Engagement Question

I want you to ask yourself a question that I often pose to C-Suite leaders during a technical audit:

"If a customer asked to see every piece of data your AI has learned about them today, would you be proud to show them—or terrified?"

If the answer is "terrified," you don't have a technical problem. You have an integrity problem.

We need to move past the "speeds and feeds" of AI and get back to the fundamentals of marketing analytics. Analytics should be a "customer experience launching pad," not a dragnet.

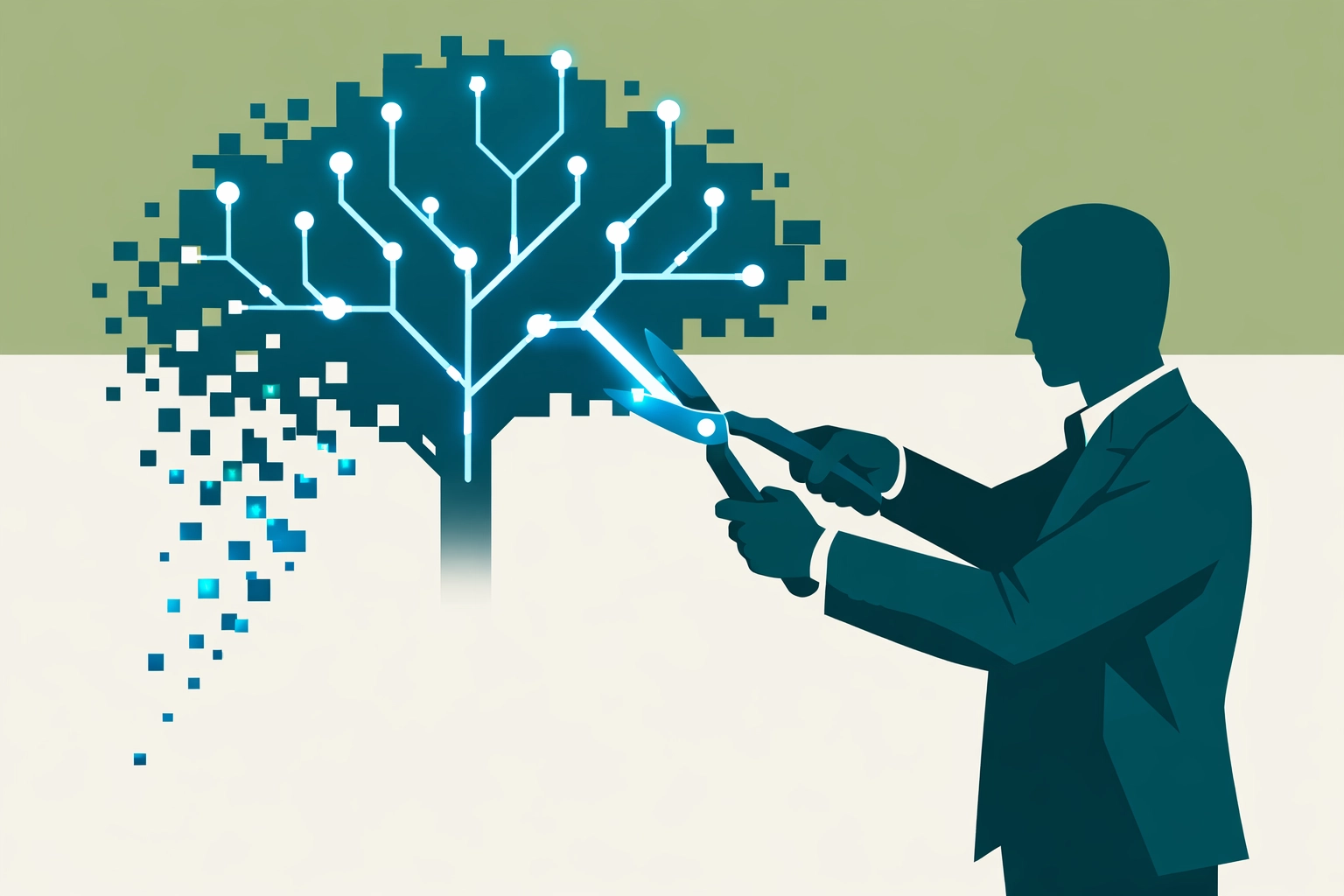

The takeaway for today: Stop hiring "vendors" to dump more data into your systems. Start building "architects" who know how to prune the garden. Your AI, and your brand reputation, depend on it.

Are you ready to move from "Data-Drowning" to "Insight-Driven"? It starts with a clean slate and a commitment to stewardship.

If you’re not sure where your data is living or how your AI is using it, let’s talk. We specialize in helping complex organizations find analytical clarity without the "creepy" factor.

What’s your system for data purging? Do you have one, or are you just buying more cloud storage and hoping for the best? Reply to this or reach out. I’d love to hear how you’re handling the "Creep Paradox" in your neck of the woods.

Stay sharp,

Marcus Sanford

Owner, MM Sanford

Marketing Analytics & SEO Consultant